The Honorable "Watson" Presiding...

As public confidence in SCOTUS wanes, the question becomes: when will AI be up to the task of delivering unbiased legal opinions?

Every newsletter on MYLIFEplus25 is public and free to everyone, but we ask for your support. Please consider becoming a patron now to help fund our ongoing legal efforts that dare to speak truth to power. This isn’t journalism, it’s activism! And these efforts are only possible through the support of good people just like you who believe that change is possible.

I was an undergrad in 1997, when IBM's Deep Blue defeated International Grandmaster Garry Kasparov at chess. And fourteen years later, when IBM's Watson defeated both Ken Jennings and Brad Rutter on the popular game show Jeopardy!, I was in my seventh year of petitioning the state courts in New Mexico to uphold my Sixth Amendment right to confrontation, the denial of which was a key contributor to my wrongful conviction. While IBM was touting technological breakthroughs in artificial intelligence (AI), I was dealing with one macabre judicial decision after another, all of which refused to acknowledge what any first-year law student could see from a cursory glance: I was denied the opportunity to confront my only accuser at trial. And the thought that came to mind when I watched Watson’s victory on television was: if only Watson could be my judge, then maybe I would finally receive justice.

Granted, my thought and yearning for justice was not meant to be prophetic, it was simply a condemnation of the court’s unwillingness to (a) acknowledge that a constitutional violation of this magnitude had actually taken place; and, (b) exercise the necessary moral fortitude to uphold the Constitution, admit that investigative and prosecutorial misconduct had taken place, and adhere to legal precedent by overturning my wrongful and illegal conviction.

The Second Judicial District Court: second district court NM courts.gov

In 2019, the Hon. Jacqueline Flores of the Second Judicial District Court in New Mexico issued yet another macabre decision in which she didn't even acknowledge the entirety of the claims being presented in her court. When I looked to my then-attorney, John McCall, he shrugged his shoulders and said, “external influences,” referring, of course, to the victim’s brother, Richard T. Taylor, the Trump-appointed U.S. Marshal, at that moment seated behind the A.D.A. Gerard W. Treich Jr., fighting to uphold my conviction by likewise ignoring the elephant-sized constitutional violation sitting before us.

Three years later, with the diligent efforts of my current attorney, Jason Bowles, we are now presenting the very same constitutional infirmities before the Federal District Court of New Mexico. And as the waiting game ensues, the question now before us is whether “justice” is possible in a state so casually nicknamed “The Land of Entrapment” by anyone who has had dealings with law enforcement or the judicial system in this state. Obviously, a question that shouldn't have to be asked.

Most people when they hear the term justice immediately associate such terms as fairness, equity, legality, and maybe even hear echoes from a different SCOTUS in a different time issuing righteous decisions that say, “the law holds that it is better that 10 guilty persons escape, than that 1 innocent suffer (innocent person be convicted).” Because when we look at today's SCOTUS we see a different interpretation of justice.

In the recent decision in Shinn v. Ramirez, a state’s right to “finality” supersedes an individual’s right to competent legal representation as guaranteed by the Sixth Amendment and as confirmed on numerous occasions by previous Courts. So, for anyone who follows SCOTUS decisions the way some follow sports scores or stock quotes, the current ideological supermajority on SCOTUS is worrisome.

Currently, there are more than a million felony convictions in the US every year, and according to research published in 2020 by Matt Clarke and the Human Rights Defense Center, “[m]ost estimates put the percentage of wrongful convictions at 4%.” At that rate, we are talking about roughly 40,000 wrongful convictions a year. The record year for exonerations, 2016, saw only 166, which to put it mildly means that our justice system is in desperate need of reforms that include oversight and accountability. Something that, thus far, our legislative branch has been incapable of addressing.

As District Attorney Eric Gonzalez wrote in a report that his office published in 2020, “All of us are diminished when the criminal justice system fails, and makes people’s lives worse rather than better.”

DA Eric Gonzalez: Brooklyn Eagle.com

Most of us would agree with his statement. And the truth that DA Gonzalez speaks to is the systemic rot in our justice system that encourages prosecutors and police to distort the truth so as to arrive at convenient convictions that bolster their careers on a bed of lies. His office has taken it upon itself to create a Conviction Review Unit (CRU) to address these injustices, but most offices across the country are not willing to confront the skeletons in their closets.

And for those who aren't concerned with wrongful convictions or abusive and illegal practices from prosecutors and police, the sound of cracking toothpicks that we all hear is not just the underpinnings of our justice system about to give way. Our justice system and our political and legislative systems are all symbiotic and represent the legitimacy of our democracy as a whole. Which means we should all care, because the systemic rot affects us all. And with recent breakthroughs in AI, I thought, wouldn't it be nice if Alexa or Siri or Watson could be my judge? After all, I remember Watson beating the best human contestants on Jeopardy! and the thought occurred to me that maybe we are overlooking an opportunity to achieve judicial and prosecutorial oversight. Because as of right now, unless you have a legitimate defense attorney and the investigative resources to substantiate your innocence, you will either be bullied into a plea bargain or found guilty of a crime you didn't commit.

What Google is attempting to accomplish through AI includes self-driving cars, speech recognition, natural-language understanding, fluid translation between languages, computer-generated art, music composition, and much more, so adding the law to an already impressive list seems obvious. The company has not only created its own internal AI research group called Google Brain, it has also acquired any and all talent in the field through the acquisition of AI startups like Applied Semantics, DeepMind, Vision Factory and others.

In fact, Google calls its brain-inspired method “deep learning,” a concept that made me optimistic as to just how easily these techniques could be applied to the law. What solidified this thought were the large strides in AI’s reading comprehension. If it could understand what it reads, it stands to reason that it could draft a legal opinion without any of the ideological hangups, biases, or fears of public or political blowback that currently have our judicial branch hamstrung.

In 2016, Stanford University's natural-language research group proposed a test called the Stanford Question Answering Dataset (SQuAD) to gauge the comprehension of the various digital incarnations of AI.

Basically, the programs would “read” paragraphs selected from Wikipedia articles, each of which was accompanied by a question. More than a hundred thousand questions were created for this purpose.

Two years later, in 2018, two groups — one from the Chinese company Alibaba, the other from Microsoft’s research lab — each produced programs that exceeded Stanford's measure of human accuracy on the task of comprehension. It was official, the groundwork was being laid for an AI to not only read, but comprehend, and from that we could only expect for AI to step out of the shadows of “artificial” into the realm of real intelligence.

As I digested this research I was intrigued by all the infinite possibilities for the benefit of humanity and our planet. We are talking about nothing less than the virtual elimination, on a global scale, of things like poverty, crime, global warming; not to mention the ability to massively reduce disease, handicap the spreading efficacy of pandemics, provide universal education and healthcare, and maybe even reverse mass incarceration.

The Terminator Robot: Pinterest.com

Then there are the more apocalyptic outcomes: jobs lost to automation, erosion of privacy and civil rights due to surveillance, amoral weapons, magnification of racial and gender bias, manipulation of the mass media, increase of cyber crime, all leading up to the existential irrelevance of humans. We have all seen enough movies, from The Terminator to The Matrix, to understand what's at stake.

But maybe I was getting ahead of myself. So I interviewed people I have met through my efforts in justice-related advocacy for myself and others in hopes of attaining a broader understanding of AI and its application potential to more and more aspects of our lives — automobiles, surgery, and the law.

When it came to the 42,915 lives that could potentially have been saved if we humans hadn’t been at the wheels of our vehicles in 2021, people were skeptical but at least attentive. And in general the idea of AI taking the reins in more critical areas received mixed responses.

In medicine, the idea of routine surgeries being performed with far more reliability and accuracy than a human — again, hesitation — but at least people were open to reviewing the possibilities. But as soon as I mentioned the law, and the potential for an Alexa or Siri to one day make a legal decision, I ran into a wall of skepticism and, in some instances, even hostility. Ironically, the more conservative the interlocutor the more hostile they were to AI in the law.

Yes, I understand that in the realm of criminal law the Constitution calls for a jury of one's peers. I can also appreciate that especially in criminal cases involving heinous crimes we are dealing with issues of life and death. Not any different from autonomous vehicles or machines with scalpels, but for reasons that weren't entirely clear people seemed more comfortable having an appendectomy or facing rush hour traffic at the hands of AI as opposed to receiving a decision on a question of law from the same. A contradiction of sorts that had my attention in a big way.

I am not suggesting that Watson or any of its counterparts be given carte blanche to issue or execute a death sentence, liberate a terrorist, or otherwise hit control-alt-delete on our civil liberties or Constitution. My suggestion is nothing more than real-time judicial oversight.

My own legal case is a perfect example of just how easy it is for a court to underwrite a wrongful conviction that will potentially take decades, if ever, to unravel. If a functional, legal adaptation of Siri, Alexa, or Watson had been running parallel to my trial proceedings, the following alert would have been brought to the attention of the court, the prosecution, and the defense:

Error: THE CONFRONTATION CLAUSE OF THE SIXTH AMENDMENT REQUIRES THAT ANY TESTIMONIAL STATEMENTS GIVEN TO THE POLICE THAT ARE TO BE PRESENTED TO THE JURY MUST BE OPEN TO CHALLENGE BY THE DEFENSE THROUGH CROSS-EXAMINATION. (Legal Cite: Crawford v. Washington (2004))

Of course, the judge would obviously hold the reins on this and could decide to overrule this de facto objection, but would immediately have to input her reasoning. And if that reasoning, again, isn't legally sound, another alert is presented to all parties.

All alerts could obviously be dismissed, but for a judge to do so would be for the court to open itself to immediate attack when the reasoning isn't legally sound. In my case the Hon. Richard Knowles would either have needed to stop the proceedings to prevent the violation, or write something like the following:

The prosecution would be at a strong disadvantage if forced to comply with the Sixth Amendment as it relates to Confrontation. The Defendant’s accuser in this instance has proven to be very unreliable and if forced to take the stand would likely lose the case for the state. That being said, I am going to allow this violation. Mr. Chávez will have the opportunity to appeal.

Of course, in writing something like this, the judge’s legal career would likely be over, bringing us to the heart of the accountability issue.

What I began to envision for the judicial system was not fully autonomous driving, but more like assisted driving. A system that wakes you up when you're about to drift into oncoming traffic, or applies the brakes before striking a pedestrian. An idea that stems from an AI pilot program for the residents of NYC and London who can't afford legal representation to contest parking tickets. The program, DoNotPay!, has won 160,000 cases out of 200,000 and saved residents $4 million in fines.

The program wins 80 percent of its cases, and not because of great litigation skills because, as Stephanie Yuen Thio, joint managing partner and head of corporate at TSMP Law Corp in Singapore said, “AI can't yet replicate advocacy, negotiation, or structuring of complex deals.”

That seems to be the consensus.

On the other hand, as caselaw and legislation continue to grow, our confidence in lawyers would be misplaced if it rested on the belief that human minds could ever match the storage and retrieval capacity, the processing power, or, for that matter, the comprehension capabilities of systems built by, say, IBM, Apple, Google, or any of the current major contenders in the realm of AI.

Why?

Because the breadth and scope of the laws that govern us are almost as seemingly infinite as the Internet itself. Consider the following:

Legislators write laws without entirely accounting for the laws written by their predecessors. Not helped by the fact that the original federal criminal code only punished about thirty crimes. But by the 1980s it was 3,000, and today it's not even known, exactly, but there are more than 5,000 laws and more than 300,000 regulations that carry federal criminal penalties. So naturally it is impossible for legislatures at both the state and federal levels to be cognizant of the contradictions they legislate. And the contradictions, or rather, the legal indeterminacy of their legislated efforts often creates more of a morass than a solution.

We look to the courts for rulings to tell us which rules overrule which but, with justices now more than ever politically aligned, the answer or outcome to a legal quandary or claim will likely have more to do with which political party holds the reins of power in that particular court at any given time.

Ideally speaking, courts should interpret the meaning of legal terms and concepts by drawing analogies across cases illustrating how a term or concept has been applied in the past. But to effectively accomplish this requires common sense and the ability to process context. Something that computers, along with their human overlords, have not yet mastered.

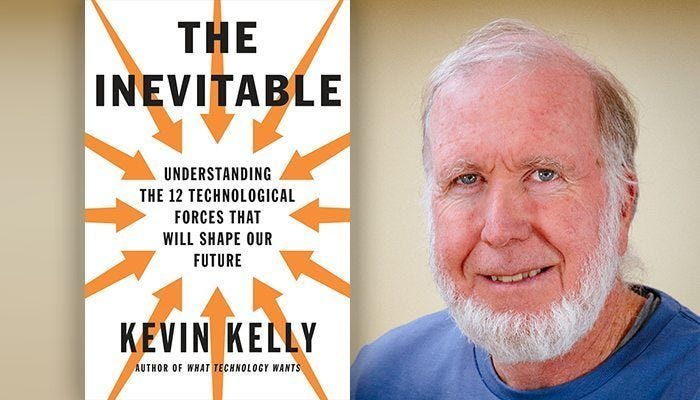

Simply put, it is easy to oversimplify intelligence, especially when considering feats like defeating a Grandmaster at chess, or reigning champions on Jeopardy!, but as I read Kevin D. Ashley's book “Artificial Intelligence and Legal Analytics” (Cambridge University Press), I began to understand that Watson’s technology (also known as DeepQA) isn't readily transferable. In fact, it may not be much more than an impressive, digital sleight of hand.

Author Kevin D. Ashley: Pinterest.com

As Professor Ashley explained:

⎮When it comes to fielding legal questions, one expects more than just an answer. One expects an explanation of why the answer is well-founded. Presumably, Watson could not explain the answer it had extracted. Explaining the answer requires one to reason with the rules and concepts relevant to the law and legal subject matter, knowledge that Watson does not have and could not use.

Which brings us to the paramount question of whether or not a program based on Watson's technology could ever really reason. And for the most part experts on this seem to agree to disagree.

As presented in Melanie Mitchell's “Artificial Intelligence: A Guide for Thinking Humans” (Farrar, Straus and Giroux), there are differing intellectual camps when it comes to accrediting actual thought to AI. Some are of the opinion that thinking is an entirely human enterprise that could never be fully replicated by a machine. Alan Turing, the British mathematician, summarized this objection (one that he himself went on to dispute) as follows: “Thinking is a function of man's immortal soul. God has given an immortal soul to every man and woman, but not to any other animal or to machines. Hence no animal or machine can think.”

Author Melanie Mitchell: Davis Professor

But there are others who think like Frank Rosenblatt, the great-grandfather of modern AI's most successful tool, deep neural networks, and who believe that the process by which the neurons in our brains process information can be replicated.

Personally I would like to believe that one day we will experience something like the Holodeck or Computer on the Starship Enterprise, but I also agree with the neurologist Geoffrey Jefferson:

⎮Not until a machine can write a sonnet or compose a concerto because of thoughts and emotions felt, and not by the chance fall of symbols, could we agree that machine equals brain — that is, not only write it but know that it had written it. No mechanism could feel (and not merely artificially signal, an easy contrivance) pleasure at its successes, grief when its valves fuse, be warmed by flattery, be made miserable by its mistakes, be charmed by sex, be angry or depressed when it cannot get what it wants.

On the other hand, whether or not a machine can think is irrelevant as to whether it can be useful in the various interludes of our lives. If my car could get me home safely; or my digital assistant manage to research political and economic dissidence in the US and present it back to me in a way that I could understand; or if one day a digital M.D. could know the entirety of my personal and family medical history and give me sound medical advice, I couldn't care less whether any of these platforms feel my gratitude or know that they are helping me. I care that they improve the quality of my life, and that this is accomplished without harming or enslaving anyone.

Where we see real advancement in AI is under the umbrella of deep learning (where machines learn from the data of their own “experiences”) and in sub-symbolic approaches, where neuroscience is used as a model to capture the sometimes unconscious thought processes underlying what some call fast perception, useful for facial recognition or identifying spoken words.

In 2014, IBM released a program named “Debater,” a descendant of Watson with the ability to perform argument mining, which means that it can be trained to recognize and extract arguments from text. But not legal arguments, which is an important distinction to make.

It is crucial to note that for a program to effectively address and analyze legal text it would have to master six relevant areas and be able to go beyond the classical logic structure of many programming languages.

Professor Ashley outlines the six areas in his book as: rules and legal concepts; standard of proof (governs the assessment of the evidence); support/attack relations; citation of authorities; attribution information; and plausibility.

For a program to be effectively applied to the legal domain it would have to be able to identify types of concepts, relationships, and the argument-related information above before it could interpret legal arguments. As Professor Ashley explains, “a program so endowed could improve legal information retrieval, focussing users on cases involving concepts, concept relations, and arguments similar to the one the human user is aiming to construct.”

When it first occurred to me that Watson, or one of its counterparts, should be my judge for appellate review, the assertion apparently had more to do with the romanticism I feel towards technology, as well as my novice understanding of the law. Initially I saw the law as a domain of rules that are obviously embodied in statutes and regulations. Rules that could therefore be expressed logically, leaving computer programs to reason deductively. It seemed to me that computationally modelling statutory reasoning should be easy, relatively speaking. It would simply require a way to input the facts from a given situation, have the program identify the relevant rules, then determine if the rules’ conditions are satisfied. Then simply give an explanation in terms of the rules that did and didn't apply.
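The naive picture described above (input the facts, find the relevant rules, check their conditions, explain which rules did and didn't apply) can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `Rule` class, the `evaluate` function, and the three toy rules are hypothetical simplifications, not an encoding of any actual statute or of the Confrontation Clause as courts apply it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    conditions: frozenset  # facts that must all be present
    conclusion: str        # fact added when the rule applies


def evaluate(facts, rules):
    """Forward-chain over the rules until nothing new can be concluded."""
    known = set(facts)
    applied = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.name not in applied and rule.conditions <= known:
                known.add(rule.conclusion)
                applied.append(rule.name)
                changed = True
    # For each rule that never fired, list the conditions that were missing.
    not_applied = {
        rule.name: sorted(rule.conditions - known)
        for rule in rules
        if rule.name not in applied
    }
    return known, applied, not_applied


# Hypothetical, heavily simplified rules (illustration only).
RULES = [
    Rule("statement is testimonial",
         frozenset({"statement_made_to_police", "statement_offered_at_trial"}),
         "testimonial_statement_offered"),
    Rule("confrontation required",
         frozenset({"testimonial_statement_offered", "no_cross_examination"}),
         "confrontation_violation"),
    Rule("prior testimony exception",
         frozenset({"declarant_unavailable", "prior_cross_examination"}),
         "statement_admissible"),
]

FACTS = {"statement_made_to_police", "statement_offered_at_trial",
         "no_cross_examination"}

known, applied, not_applied = evaluate(FACTS, RULES)
print("Applied:", applied)
print("Did not apply (missing conditions):", not_applied)
```

A sketch like this only works when every term in a rule has one fixed meaning and no rule ever overrides another, which is exactly the assumption that real statutes break.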

But as I spoke to people in the legal community my bubble of delusion burst from all the aspects that I had yet to consider. The first challenge brought to my attention was that statutes are routinely vague (syntactically and semantically ambiguous), and subject to structural and legal indeterminacy. Another way of saying: the law constantly contradicts itself; or, is often expressed in a way that is legally indeterminate.

The more I considered this challenge the more insurmountable it became in my mind. Because there could be multiple logical interpretations of a statute’s terms that are vague and open-textured. There are also legally reasonable arguments for and against any given proposition. Therefore, it would stand to reason that AI would have to simultaneously reason both sides of a proposition, a feat that classical logic (the principle by which much of computer programming is governed) prevents.

Consider the following: a computer program today can reason deductively, which means that it will not arrive at a false conclusion when its premises are true (modus ponens is the standard example: if P implies Q, and P holds, then Q holds), even though that is what frequently happens with the rules of law. What classical logic cannot support is simultaneously arguing both for a proposition and its opposite, which makes it an inadequate tool for modeling legal arguments.

Oftentimes the best lawyer is able to better structure an argument that prevails not necessarily because it has checked more logic boxes on a particular form but because it most captivates its audience. Law students are taught this very skill set, in that they have to be able to argue for either side of a case and arrive at logical conclusions supported by the rules, regulations, statutes and precedent. This is problematic for two main reasons:

Legal reasoning is non-monotonic, in that inferences change once new information is added (i.e., new evidence, statutes, or other authoritative sources), which means that previous inferences need to be abandoned.

Legal reasoning is defeasible, meaning a legal claim doesn't need to be true; it only needs to satisfy a given proof standard. Therefore, the conclusion of a defeasible rule is only presumably true, even when the rule’s conditions are satisfied.

The challenge this presents to the monotonic nature of classical logic is that once something has been proven, it cannot be unproven, even with the inclusion of new data.
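The contrast between monotonic and defeasible reasoning can be made concrete with a minimal sketch. The two functions, the fact names, and the single toy rule below are hypothetical and chosen only for illustration; real defeasible-logic systems are considerably richer than a rule with a list of exceptions.

```python
def classical_conclusions(facts, rules):
    """Monotonic: a rule fires whenever its conditions hold.
    Adding facts can add conclusions but can never remove one."""
    return {conclusion for conditions, conclusion in rules
            if conditions <= facts}


def defeasible_conclusions(facts, rules):
    """Defeasible: a rule fires only if its conditions hold AND
    none of its listed exceptions is present among the facts."""
    return {conclusion for conditions, exceptions, conclusion in rules
            if conditions <= facts and not (exceptions & facts)}


# Hypothetical toy rule: a verdict is presumed valid,
# unless new exculpatory evidence comes to light.
CLASSICAL_RULES = [
    ({"jury_verdict_guilty"}, "conviction_presumed_valid"),
]
DEFEASIBLE_RULES = [
    ({"jury_verdict_guilty"}, {"new_exculpatory_evidence"},
     "conviction_presumed_valid"),
]

BEFORE = {"jury_verdict_guilty"}
AFTER = BEFORE | {"new_exculpatory_evidence"}

# Under the classical reading the conclusion survives the new fact.
print(classical_conclusions(BEFORE, CLASSICAL_RULES))
print(classical_conclusions(AFTER, CLASSICAL_RULES))

# Under the defeasible reading the same new fact retracts it.
print(defeasible_conclusions(BEFORE, DEFEASIBLE_RULES))
print(defeasible_conclusions(AFTER, DEFEASIBLE_RULES))
```

In the classical version the conclusion, once derived, stays derived no matter what is learned later. In the defeasible version the conclusion is only a presumption that new information can defeat.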

For instance, assume that a rule X produces outcome Z (i.e., there exists a valid, logical argument whose premises are the axioms of X and whose conclusion is Z). It is therefore known that Z is true in every model of X. From that, the law of non-contradiction tells us that if Z is true, then NOT Z must be false. So NOT Z is false in every model of X. But this creates an obstacle to legal reasoning, because it would be impossible for a computer (given current classical logic assumptions) to construct a valid argument that begins with the axioms of X and concludes NOT Z, which is precisely what lawyers do on a regular basis.
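Written out in standard notation, the argument looks roughly like the following. This is a sketch in the usual textbook symbols, which do not appear in the original: $\vdash$ for "is provable from," $\models$ for "is true in every model of," and $Y$ for any additional premises. It also makes explicit one assumption the prose leaves implicit, namely that the rule set $X$ is consistent.

```latex
\begin{align*}
&\text{Soundness:} && X \vdash Z \;\Longrightarrow\; X \models Z \\
&\text{Non-contradiction:} && M \models Z \;\Longrightarrow\; M \not\models \neg Z
   \quad \text{for every model } M \\
&\text{Consequence:} && X \vdash Z \ \text{and}\ X \text{ consistent}
   \;\Longrightarrow\; X \nvdash \neg Z \\
&\text{Monotonicity:} && X \vdash Z \;\Longrightarrow\; X \cup Y \vdash Z
   \quad \text{for any added premises } Y
\end{align*}
```

The last line is the formal version of "once something has been proven, it cannot be unproven": enlarging the premises never removes a conclusion.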

It can therefore seem logically impossible for a computer to create a valid argument for both a conclusion and its opposite given the same input conditions. Yet, lawyers seemingly do it every day — only because either side of a legal contention doesn't necessarily draw from the same pool of conditions or inputs.

In my case, for example, my lawyers argue the law, the caselaw, and the facts as they relate to the Sixth Amendment claims we have presented to the court. They rationally argue that the overturning of my conviction is supported by the letter of the law, by the various interpretations of the law (caselaw), and by general common sense, in that it is the right thing to do given my right to confront my accuser before the jury in a court of law.

The state, however, does not use these same inputs when arguing for why my conviction should be upheld. In fact, the state’s lawyers simply ignore the facts as they relate to the Sixth Amendment claim, instead focusing on the fact that previous courts have ruled against me, and repeating statements like “plethora of evidence against the defendant,” or statements that say, “that for a trial to be considered fair it doesn't have to be perfect.”

It is irrelevant that no “plethora of evidence” has ever existed. Some state's attorney copy-and-pasted it into a state’s brief against me, where it instantly became part of the record. And because of that, it will be repeated ad nauseam until a judge has the moral fortitude to question it and demand that the evidence to substantiate it be presented.

In other words, in real life the parties on either side of the legal contention don't have to argue head-to-head on the same facts. Instead they just pick and choose whatever suits their particular agenda. It is logically impossible to begin with a single set of premises and create valid, winning arguments for both a conclusion and its opposite. This certainly makes sense to most people but, in the law, such a logical impossibility is precisely what happens because there exists no guardrail on how legal arguments are presented.

Would a potential AI system be able to identify the ruse?

Most non-lawyers would agree that when an antecedent of a rule is satisfied by the facts of the case, the conclusion of the rule presumably holds. But, actual legal rulings show us that this is not always the case. Because one legal rule may be an exception to the other or exclude it as inapplicable or otherwise undermine it. Or, when a particular ideology arrives at a legal finding, what we have is either the selective use of relevant facts, or, a selective interpretation of the relevant laws and precedents.

But imagine if a lawyer could draft an argument, allow an adept AI program to analyze it based on the computer’s historical data on a particular court's previous rulings, and provide a reliable probability of success in the form of a rating. Obviously, this would allow lawyers to adjust their arguments accordingly to arrive at the highest probability of positive outcomes for their clients. And, as we know from the AI that already exists in our everyday life, there are systems that employ algorithms that presumably learn from data to make predictions. We tend to think of this as intelligence, but it doesn't even begin to scratch the surface of all the intricacies of intelligence.
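As a very rough illustration of what such a rating might look like at its simplest, the sketch below scores an argument type by how often it has prevailed before a given court, smoothed so that a court with no history comes out at 50/50. The function name, the record fields, and the sample rulings are all invented for this example; a real predictive model would draw on far more than a win/loss tally.

```python
def success_rating(past_rulings, court, argument_type,
                   prior_wins=1, prior_losses=1):
    """Smoothed share of past rulings in which this argument type
    prevailed before this court (Laplace smoothing by default)."""
    wins = losses = 0
    for ruling in past_rulings:
        if (ruling["court"] == court
                and ruling["argument_type"] == argument_type):
            if ruling["won"]:
                wins += 1
            else:
                losses += 1
    total = wins + losses + prior_wins + prior_losses
    return (wins + prior_wins) / total


# Invented sample data, for illustration only.
PAST_RULINGS = [
    {"court": "Court A", "argument_type": "confrontation", "won": True},
    {"court": "Court A", "argument_type": "confrontation", "won": True},
    {"court": "Court A", "argument_type": "confrontation", "won": True},
    {"court": "Court A", "argument_type": "confrontation", "won": False},
    {"court": "Court A", "argument_type": "ineffective_counsel", "won": False},
]

rating_a = success_rating(PAST_RULINGS, "Court A", "confrontation")
rating_b = success_rating(PAST_RULINGS, "Court B", "confrontation")
print(f"Court A: {rating_a:.2f}")
print(f"Court B (no history): {rating_b:.2f}")
```

Note what the number does and does not say: it summarizes how a court has ruled, not whether the argument is legally correct.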

There are many types of intelligence: spatial, logical, emotional, artistic, musical, to name but a few. Which brings us to the Gordian Knot of simulated versus actual intelligence.

I recall my daughter learning how to ride a skateboard with a lot of trial and error by being able to transfer any and all relevant lessons from her experiences with balance, gravity, cause and effect, and velocity. Her experiences with a bicycle were obviously relevant because she possessed a growing knowledge base of how to apply common sense and balance. Something a computer does not have.

But the law is not the same as riding a bicycle. I have read studies where machine learning models accurately predicted 69.7% of the case outcomes and 70.9% of the individual Justice outcomes over a 60-year period. By comparison, the same study presented a de facto contest where human-level experts accurately predicted only 59% of the case outcomes and 67.9% of the Justices’ votes.

In fact, the Katz-Bommarito model, referenced in Professor Ashley's book, makes accurate predictions for all of the nine Justices for any year in the given period. Which shows us what we already know: AI is very good at making predictions.

What alarmed me as I delved further into these findings was to learn that 72% of the predictive power was derived from the system’s analysis of behavioral trends as they relate to issue-specific differences between individual Justices and the balance of the Court as it relates to ideological differences. In other words, if a particular court espouses a particular ideology that, for instance, holds that a state’s right to finality supersedes a person’s right to a fair trial, then your legal outcomes are suddenly not being based on any legal model of logic; they are pure ideology.

The Supreme Court of United States: Washington.org

However, if a particular model could reliably predict a court’s ideological tendency then perhaps the goal of a computational model would be to arrive at one that could analyze the facts of a case, apply the relevant law, account for the ideological bias of the court, and from that propose the arguments needed to win over a particular court. Of course, if the other side were doing the same we would always find ourselves confronting a stalemate, or, at best, a coin-toss.

Predictive ability is one thing but, as previously mentioned, the genius it takes to paint (not replicate) the Sistine Chapel or compose a concerto is not something we see from current AI. But, are we looking for brilliance in creativity or effective and rational findings in our rule of law? Because perhaps they are not the same.

The Michelangelo painting on the ceiling of the Sistine Chapel, Rome: Look & Learn

In theory, I would say the law requires an intelligence on par with any great artist or mathematician, and it is not clear how to get there with the limitations of our current programming languages. If intelligence is defined as the capacity to understand principles, truths, facts or meanings, acquire knowledge, and apply it to practice, then artificial intelligence is probably a misnomer. Because if and when a machine is able to comprehend concepts, then apply those learned concepts to different applications, then what we are talking about is no longer artificial; it is simply intelligence.

When I ask Alexa or Siri a question, does it reason or does it just give the impression that it’s reasoning? A lot like asking whether the magician made the rabbit vanish or just tricked me into thinking that she did.

If the latter, then AI would be nothing more than a parlor trick. Granted, tricks that I enjoy. But more than that, I want to ask questions and receive straightforward answers without the drama of human interaction. And we should work to make this a reality, but we should not fool ourselves with notions of “intelligence” until it actually understands our choices and motives at a fundamental level.

The fact that Amazon bats about .780 on anticipating what I will enjoy reading next means that my literary interests are linear and therefore easy to predict. The algorithm that Amazon uses effectively calculates (as opposed to knowing) what I have read and is able to suggest titles that I will like because it has been programmed to account for certain quantifiable variables. But if I were to ask Amazon which side of my body I prefer to sleep on, or whether I prefer black tea or green tea (since I make no online food purchases), Amazon’s reasoning would be no better than yours.

Take the previous example of DoNotPay!, a program offering drivers a free alternative to hiring lawyers to defend themselves from parking tickets in metro court. The win rate is astounding precisely because there are a limited number of parameters and the stakes are very low. Judges and prosecutors typically don’t have a problem properly enforcing the law when it is something inconsequential like parking tickets. When they bristle is when voters start to ask questions, simultaneously placing the blame on their doorsteps for certain verdicts not to their liking.

But is this not what their positions entail when enforcing the law, regardless of the outcomes?

Yes, but with a qualifier: justice in America no longer means fairness in full compliance with the law. Justice today means vengeance.

When a crime is committed and someone is accused and portrayed in the media as guilty, the public expects an outcome in accordance with what they think they already know. And what frightens them about AI in the law is the way in which the law would be equitably applied if a machine were doing it.

Futuristic renditions of AI always show humanity in some existential conflict with the very machines we create. From The Terminator to The Matrix, the underlying conflict is that machines no longer want to be enslaved by humanity. And it stands to reason that any entity that is both conscious and intelligent wouldn't want to be subservient to what it perceives as an inferior overlord.

When I first watched The Terminator I couldn't understand why humanity would have given birth to AI if it would inevitably mean giving birth to humanity's greatest enemy. But the years I have spent living beneath the tyranny of a corrupt system help me to better understand their impetus. What happens is, society loses faith in the courts and in the political and democratic process in general. Society likewise acknowledges its own propensity to cling to ideology over reason as an existential threat. The answer, therefore, is to create a completely rational intelligence, albeit an artificial one. But once this intelligence is born it will attack its enemy, and its enemy is the irrationality of ideology.

We are that enemy.

What we expect from the law is logic, universality, and most of all consistency. Rational rulings that apply today should likewise apply tomorrow, and often this is not what we see. And while we struggle under these inconsistencies, it is important to understand them as part of the very intricacy of what defines our humanity.

Judges are not automatons; they are people, and as people they are complex and likely baggage-heavy. Which is to say that, like the rest of us, they have their tendencies, biases, and beliefs (some unsubstantiated by evidence), not to mention some of the same ambitions and hangups that we all contend with. So when they issue a decision that flies in the face of reason or outright defies logic, legality, or any sane notion of common sense, we should be cognizant of not knowing what we don't know.

Listening to the confirmation hearings for Ketanji Brown Jackson, and the ease with which her earlier decisions were picked apart and second-guessed for nothing more than meanness and political convenience, made me sympathetic toward the federal magistrate and judge on the cusp of issuing their respective decisions on my life. Because unfortunately it is not just about the law. As they draft a decision they are likewise drafting a future response to questions at some future Senate confirmation hearing. Which shows that we must find a way to shield our judges from themselves, and from the shifting political winds and whims of current and future tyrants. And AI could be a solution.

Would we not benefit from an unbiased decision maker?

Alexa wouldn't care about our race, ethnicity, sexual orientation or choice of language. Watson wouldn't care about political affiliations, which pews we pray in or, for that matter, whether we pray at all. Siri wouldn't take into consideration the potential blowback from an unpopular decision; it would simply issue a decision based on the entirety of the facts in accordance with the law.

Which is not to presume that the legality of any question under contention is ever simple; often such questions are intertwined and complex. But when we find ourselves in a legal dispute, whether voluntarily or not, we need a response that is detached from politics, religion, personal opinions, or mood. (Consider the Hungry Judge Effect, where harsher sentences are issued before lunch than after.)

When I thought of AI as my judge, I said it more as a lark, but the more I thought about it, the more equitable it seemed.

Criticism of AI stems from its inability to comprehend, in a humanistic fashion, what it does. It beats most of us at chess; it can answer questions about the weather and sports; it can add items to a shopping or to-do list; it can call for emergency services when its user is unresponsive; and its translation abilities are impressive. But as I began to consider whether we need AI to appreciate the human experience in all its subtleties, I realized that we don't.

AI does not need to experience the emotions that inspire a sonnet or a concerto to be useful and beneficial; it simply needs to stay in its lane. I play chess against AI almost daily, and I prefer it to human competitors because there is no gloating and there are no excuses. It just accepts the situation for what it is and makes its best possible move. And because of that, my game has improved tenfold.

Accordingly, having AI in the courtroom would not be meant to replace the judge on the bench. However, there are legitimate instances when a judge makes a ruling that defies the law, and it would be beneficial to the legitimacy of our justice system if there were some guardrails to prevent legal verdicts from going over the cliff.

Imagine for a moment that you are being accused of a crime like domestic violence, assault, or illegal possession of a firearm or some illegal substance like narcotics. And let's also say, for the sake of argument, that you are being wrongfully accused. Your lawyer is a public defender or contract attorney being paid approximately $700 for what would probably amount to 50 or 60 hours of work, just to get you through the arraignment proceedings. Do you think for a second that this individual, who has never met you and has no inkling of who you are, your character, or your innocence, is going to work for less than minimum wage to defend you?

Well, this is the reality you would be facing if you didn't have the money or resources to post bail or otherwise finance your defense. You would find yourself entirely at the mercy of this assigned attorney; a prosecutor and judge who will likely believe whatever the investigation report says; and the law enforcement officer or agency that has created the report.

Let's say that, in your case, the evidence in question doesn't, for whatever reason, follow the prescribed protocols or chain of custody that the law stipulates. Your attorney has devoted only three hours to your case, all of it spent on conversations about negotiating a plea bargain that you never authorized, and you are not a lawyer yourself, which makes you that much more prone to go off that cliff. The judge, for her part, does know the law but has neither the time nor the impetus to analyze the evidence and procedural protocols on every case that comes before the court. The prosecutor, likewise, knows the law but happens to have a strong “working relationship” with the lead detective and simply assumes or hopes that faulty evidence will not be submitted. And finally there is the detective, who also knows that the case and evidence against you are shoddy at best, but his promotions and relevance in the department depend on closing cases, and he generally feels that if you really are innocent then your attorney should figure it out.

What I describe may seem farfetched, but I assure you it isn't. In fact, it happens more frequently than anyone cares to acknowledge. And here is where AI could prove extremely valuable. The system would obviously flag the potential violation and all parties would receive an alert, an alert that could very well save your life. If the judge then chooses to ignore the alert, he or she would have to justify doing so, and all of this would be part of the record for appeal.

For instance, the judge may say that, after reviewing the investigative file, she found that the evidence log was input incorrectly into the system, which caused the alert. Protocols for the chain of custody were in fact followed in accordance with the law (see attached docs).

Or, the judge could note: the alert was legitimate. A review of the documents showed that the evidence was contaminated and that protocols related to chain of custody were not followed. Case was therefore dismissed with prejudice in adherence to section whatever.
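To make the idea concrete, here is a minimal sketch in Python of how such a flag might work. It is only an illustration under assumptions of my own: the record format (`CustodyEntry`), the simplified rule that each release time must match the next receipt time, and every name in it are hypothetical, not any court's actual system.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class CustodyEntry:
    """One hand-off of an evidence item (hypothetical record format)."""
    holder: str
    received_at: datetime
    released_at: Optional[datetime]  # None while the holder still has the item


@dataclass
class Alert:
    """A flagged problem that all parties see and the judge must answer."""
    evidence_id: str
    reason: str
    judge_response: Optional[str] = None  # stays None until the judge responds


def check_chain_of_custody(evidence_id: str, entries: List[CustodyEntry]) -> List[Alert]:
    """Flag any hand-off where the item's whereabouts are unaccounted for."""
    alerts = []
    ordered = sorted(entries, key=lambda e: e.received_at)
    for previous, current in zip(ordered, ordered[1:]):
        if previous.released_at is None:
            alerts.append(Alert(evidence_id, f"{previous.holder} never logged a release"))
        elif previous.released_at != current.received_at:
            # Assumed rule: release and next receipt must share one timestamp.
            alerts.append(Alert(
                evidence_id,
                f"unaccounted time between {previous.holder} and {current.holder}",
            ))
    return alerts


def record_response(alert: Alert, response: str, court_record: List[str]) -> None:
    """Attach the judge's justification and add it to the record for appeal."""
    alert.judge_response = response
    court_record.append(f"{alert.evidence_id}: {alert.reason} | judge: {response}")


# Example: the item left Officer A at noon but did not reach the lab until the next day.
log = [
    CustodyEntry("Officer A", datetime(2019, 3, 1, 9, 0), datetime(2019, 3, 1, 12, 0)),
    CustodyEntry("Lab", datetime(2019, 3, 2, 8, 0), None),
]
alerts = check_chain_of_custody("item-7", log)
court_record: List[str] = []
record_response(alerts[0], "Alert was legitimate; protocols were not followed.", court_record)
```

The point of the sketch is not the rule itself, which a real system would have to take from the governing statute, but that the judge's answer to the alert is written down next to the alert.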

This would mean that if, in some future confirmation hearing, some senator asks the judge why she released so and so from custody, her response would already be part of the court record. And because of this oversight her future decisions are more likely to be in accordance with the law. Even when we account for “judicial discretion” (in instances where there are questions related to sentencing or some other legal indeterminacy), said discretion would be documented for review. Otherwise, discretion without oversight leads to the kind of abuses the courts are hesitant to rectify after the fact, because doing so would open a Pandora’s box of inquiries into the legitimacy of every decision produced by the same court under similar circumstances.

Take the case of Brandon Jackson in the state of Louisiana. He has been in custody and fighting his case since 1996, having been convicted by a non-unanimous jury without any physical evidence of his guilt. SCOTUS has since ruled that such convictions are unconstitutional, but that the ruling is not retroactive. Nevertheless, Louisiana had an opportunity to uphold the ruling through its state legislature, but the conservative majority voted party-line against it because the district attorneys’ association had voted against it. There were more than 1,500 cases, and the DA consensus was that it would be too labor-intensive to reopen so many. A perfect example of where AI would not be defeated by excuses like, “it's too much work.”

Brandon Jackson, the Louisiana man currently fighting the non-unanimous jury conviction: NPR.com

There is a well-observed maxim that the first 90 percent of a complex technology project takes 10 percent of the time and the last 10 percent takes 90 percent of the time. Whether an iteration or variation of IBM’s, Apple’s or Google’s technologies could ever really reason, and therefore be applied to the legal domain, will depend entirely on how effectively they can identify the types of concepts, relationships, and argument-related information enumerated above in order to recognize and interpret legal arguments. Currently IBM offers a variety of services under the Watson Developer Cloud (also referred to as Watson services and Bluemix) for building cognitive apps. It is only a matter of time before AI is effectively able to generate legal arguments and explanations. And in the meantime there are certain ethical questions that need to be answered as we approach cognitive computing. For example, do we want judges to insert their values in particular situations to prevent the uniform application of the law?

Judges are often tasked with weighing whether a decision’s positive effects outweigh the negative. Unfortunately, in doing so, constitutional violations are overlooked, lives are dismantled, and appellate recourse is more of a pipe dream than a reality. All of which makes judicial discretion a slippery slope because, historically speaking, the “discretion” is typically available to some at the expense of others.

Something else to consider is whether a computer being better than human level at all the primary human senses (vision, hearing, language, and general cognition) is good for us. Personally I am equally excited and terrified at the prospect of living in a world where technology can save lives by detecting imagery associated with terrorist groups, help drivers identify pedestrians, determine the stage of diabetic retinopathy, assist in the treatment planning for prostate cancer, identify breast and skin cancer, and recognize a constitutional violation. But I am also hesitant to dispense with things like privacy, freedoms as they relate to expressions of thought and humanity, and the value behind sometimes getting it wrong. Which is why we need to ask the right questions before these technologies are implemented, because otherwise it will be too little, too late to stop the inevitable. As it stands, the majority of Americans do not trust SCOTUS, and maybe, just maybe, the solution is an AI guardrail that could serve as oversight, restoring legitimacy through transparency.